2026 March 09

When Developers Stop Writing Code: How AI Is Changing Engineering Workflows

Explore how AI in software development is reshaping engineering workflows, from coding automation and AI coding assistants to system architecture, scalable software design, and the future role of developers.

The software development landscape is in flux. AI in software development is no longer a novelty, but an integral engineering capability. Instead of hand-coding every feature, many teams now rely on AI coding assistants to handle repetitive tasks and generate boilerplate code. This shift means developers spend less time typing syntax and more time defining what a system should do rather than how it should be written. As one industry analysis notes, AI-generated code already accounts for roughly 30% of new code at Google and Microsoft, and companies like Hitachi report that 83% of their developers complete tasks faster with AI coding tools. In this new era, software engineering workflows must evolve to leverage AI, and the role of engineers is expanding from writing lines of code to designing robust systems and workflows that can scale.

The Traditional Role of Developers

Historically, software engineers were hands-on coders. They wrote code manually, implemented features line by line, and debugged until the application worked. The typical workflow involved writing source code, running tests, fixing bugs, and repeating – often guided by version control and DevOps practices. In this model, productivity meant cranking out code and fixing errors. Teams followed established software engineering workflows and managed deployments through CI/CD pipelines, with little machine assistance beyond automated building and testing. Documentation and code reviews were also manual processes. Developer productivity was measured largely by the number of features delivered, lines of code written, or tickets closed, reflecting the effort of writing and maintaining code. This traditional cycle centered on the developer’s craft of coding, testing, and debugging – skills taught in every software engineering curriculum.

The Rise of AI Coding Assistants

Today, tools powered by generative AI are augmenting every stage of development. Modern AI coding assistants – including GitHub Copilot, Amazon CodeWhisperer (since folded into Amazon Q Developer), OpenAI’s Codex, and Claude – can suggest code snippets, refactor existing code, and even generate entire modules from a natural-language description. Rather than replacing coders, these AI development tools act as super-powered pair programmers. They handle routine tasks (like writing CRUD interfaces or boilerplate tests) and accelerate common workflows. For example, developers often spend 30–40% of their time writing repetitive code (interfaces, models, forms, validators, tests); AI can automate much of this work. AI assistants also help with documentation, generating README files and onboarding guides from code, thereby improving knowledge transfer and maintainability.

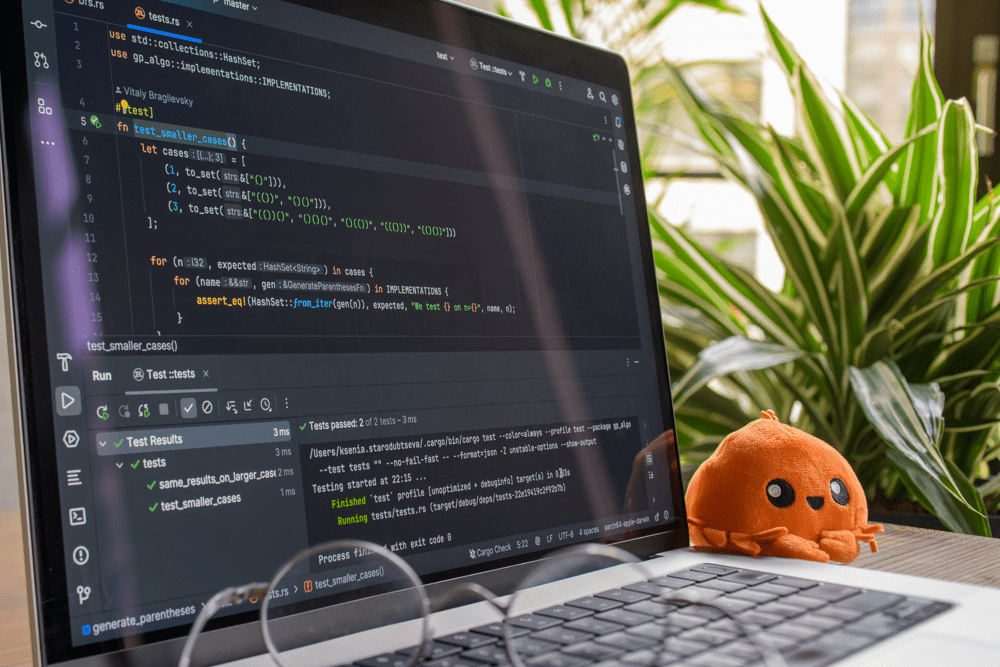

Integration into the IDE is seamless. Early on, teams turned to ChatGPT or Google to search for code snippets; now in-IDE agents provide context-aware suggestions. Industry leaders report dramatic efficiency gains: organizations including Microsoft, Google, and Morgan Stanley are embedding AI in development pipelines. GitHub Copilot can autocomplete functions based on docstrings, and newer AI agents like Cursor or Windsurf can navigate entire codebases, making coordinated edits across multiple files. These agents can even undertake full-stack tasks: from setting up server endpoints to generating frontend components and cloud configurations, all from simple prompts. In one case a well-crafted prompt produced thousands of lines of code in seconds, illustrating how AI coding tools can multiply developer effort. This transformative impact means AI is rapidly becoming part of standard engineering toolchains, reshaping the roles of developers on teams.

As AI coding assistants proliferate, development speed is rising sharply. Top teams now use multiple AI agents in parallel to further multiply their impact. In practice, some engineering organizations report 20×–100× increases in feature output compared to traditional workflows. Even mundane tasks like UI tweaks or refactoring can be done via prompts or by attaching screenshots. AI can auto-generate unit tests, integration tests, or CI/CD pipeline scripts. Documentation gets auto-written, and even architecture diagrams can be sketched from descriptions. These tools enable developers to bypass tedious coding routines and focus on higher-level work. As one engineering lead said, “We are seeing a huge step change in the way coding and software development is done – not just generating code, testing it, and deploying it, but our ability to run systems in production”.

When Developers Stop Writing Code

With AI shouldering more of the implementation, the developer’s craft is shifting. Today’s engineers spend less time on syntax and more on system design, architecture decisions, and workflow orchestration. Instead of writing every function, developers now define requirements and guardrails for AI. They craft precise prompts, outline data models, and set coding styles that the AI should follow. Senior engineers may find themselves reviewing AI-generated “pull requests” rather than writing the code themselves. In essence, developers become editors or directors of AI output: overseeing and refining code rather than hand-coding it.

This evolution also democratizes certain aspects of development. Non-technical product experts can prototype ideas by writing natural-language prompts, then working with engineers to guide the AI’s output. For greenfield projects, companies might assign small “AI-first” teams to explore rapid prototyping. In product development, AI can quickly spin up MVPs, run experiments, or even simulate how features behave under load, accelerating feedback loops. Meanwhile, experienced engineers pivot toward planning: choosing scalable architectures, setting up build and deployment workflows, and ensuring design alignment across the system. In short, writing raw code is giving way to orchestrating the entire development process.

System Thinking Becomes the Core Skill

As the hands-on coding work diminishes, systems thinking is emerging as a critical competency. Software is no longer just lines of code; it’s part of a broader complex system involving data flows, APIs, microservices, user interactions, and organizational processes. Developers must now think in terms of elements, interactions, and purpose — the three pillars of a software system. Instead of implementing features in isolation, engineers define the purpose of each component, how they interact (APIs, messaging, integrations), and what the elements (modules, services, teams) will be. This big-picture perspective ensures that AI-generated code fits coherently into the larger architecture and meets business goals.

Systems thinking also means aligning technical and organizational architecture. As Conway’s Law teaches, the structure of your software often mirrors your team structure. Agile engineering leaders recognize that redesigning a system is as much about reshaping team communication and DevOps practices as it is about technology. For example, teams might reorganize around service boundaries or platform ownership to streamline AI-enhanced development. Understanding software architecture design thus requires knowing trade-offs: when to shard a system, how to balance consistency vs. performance, or what boundaries to set for scalable software architecture.

In this AI-augmented world, developers collaborate closely with AI as a partner. The process often looks like: define a system’s purpose, prompt the AI to propose components or diagrams, review and refine those proposals, and iterate. AI tools can generate design options (e.g. “Should we use microservices or a monolith for this feature?”) and even produce UML or architecture diagrams from descriptions. But the AI doesn’t decide – it merely lays out possibilities. Human engineers must evaluate scalability, security, and maintainability implications. As one industry perspective notes, AI can enumerate pros and cons (monolith vs microservice) but lacks the domain context to pick the right solution. Thus the core skill becomes strategic judgment: choosing the patterns and system architecture that truly serve the product’s needs.

Systems thinking is also iterative. Developers define interactions and run AI-generated prototypes, then test and refine. The loop of “define, generate, test, refine” happens much faster. AI can simulate behaviors, run test cases, or identify potential failure points. Over time, the system evolves through this human-AI feedback cycle. But it always starts with a clear concept of the system’s purpose. Engineers articulate goals and constraints to the AI, guiding it to produce aligned results. In this way, development shifts from coding details to shaping system behavior. Those who excel will be the engineers who think holistically: understanding distributed systems, integration patterns, and organizational dynamics as they guide the AI.

Architecture Matters More Than Code

When AI can churn out code, the architecture — the high-level design of how components fit together — becomes paramount. Reports on AI-generated code consistently note a lack of architectural judgment. A recent study found AI-generated code to be “highly functional but systematically lacking in architectural judgment”. AI tends to produce boilerplate solutions: it follows textbook design patterns, bakes in global variables, or over-engineers by adding unnecessary layers. Without careful oversight, different parts of a system might be scaffolded differently by separate prompts, leading to inconsistent designs. For example, one AI call might set up a RESTful service, while another creates an MVC module, without a unified architecture plan. Engineers must intervene to enforce consistency and align each piece with the overall architecture.

Scalability and integration are also critical. Human architects must ensure that as AI generates more services or microservices, the system remains coherent. Scalable software architecture demands choices about data stores, caching, fault tolerance, and service orchestration that an AI alone cannot fully reason through. It’s the architect’s job to design reliable data pipelines, distributed systems, and network interactions that meet performance and reliability goals. An AI might suggest a trendy microservices approach because it’s common in training data, even when a simpler modular monolith would suffice. Only an experienced developer can weigh non-functional requirements – things like performance, latency, or cost – that an AI model doesn’t inherently understand. For example, an AI might design a chatty microservices solution with many network calls, but a human architect will catch the potential latency issues and propose alternatives.
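The latency concern with a chatty design is easy to see with back-of-the-envelope arithmetic. The sketch below is only an illustration: the hop counts, the 15 ms per-hop cost, and the 40 ms of processing time are made-up numbers, and the model assumes every network call waits for the previous one.

```python
def request_latency_ms(sequential_hops: int, per_hop_ms: float, processing_ms: float) -> float:
    """Total latency when each network hop has to wait for the previous one."""
    return sequential_hops * per_hop_ms + processing_ms


# Hypothetical numbers: the same feature built two ways.
chatty = request_latency_ms(sequential_hops=12, per_hop_ms=15, processing_ms=40)
coarse = request_latency_ms(sequential_hops=2, per_hop_ms=15, processing_ms=40)

print(f"chatty design: {chatty} ms, coarse-grained design: {coarse} ms")
```

Under these assumptions the chatty design spends 220 ms per request against 70 ms for the coarse-grained one, even though the actual processing work is identical. That is the kind of non-functional cost a reviewer has to check for explicitly.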

Good architecture and system design therefore become the work developers focus on most. Teams now value architects who can envision end-to-end solutions: how to decompose a system for scale, how to integrate cloud-native patterns, and how to evolve legacy systems. The resulting architecture must also support AI-assisted coding: it needs clear module boundaries, consistent APIs, and standardized libraries so that AI-generated code can be inserted cleanly. In sum, while AI writes code, human-led architectural vision ensures that the final product is maintainable, scalable, and aligned with business requirements.
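One way to make a module boundary explicit is to write the contract down before any implementation is generated. The following is a minimal sketch using Python's `typing.Protocol`; the `OrderRepository` interface and the in-memory implementation are hypothetical names invented for illustration, not part of any particular codebase.

```python
from typing import Optional, Protocol, runtime_checkable


@runtime_checkable
class OrderRepository(Protocol):
    """Human-defined boundary: any implementation, generated or not, must match it."""

    def get(self, order_id: str) -> Optional[dict]: ...

    def save(self, order: dict) -> None: ...


class InMemoryOrderRepository:
    """Stand-in for an implementation that an AI assistant might generate."""

    def __init__(self) -> None:
        self._orders: dict = {}

    def get(self, order_id: str) -> Optional[dict]:
        return self._orders.get(order_id)

    def save(self, order: dict) -> None:
        self._orders[order["id"]] = order


repo = InMemoryOrderRepository()
# runtime_checkable only verifies that the methods exist, not their signatures.
print(isinstance(repo, OrderRepository))
```

The runtime check is shallow, so a static type checker and tests would still be needed to confirm that signatures and behavior match. The point is that the boundary is stated once, by a person, and every generated piece is held to it.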

At the same time, many of the technical details still matter. Developers must enforce coding standards and integration tests. DevOps practices evolve to include AI-driven quality checks: teams integrate AI code review tools that scan for security flaws and style issues. But ultimate responsibility remains human: every AI-generated pull request needs review. As one strategy suggests, treat AI output like junior developer code. Lead engineers guide the prompts (crafting them like instructions to a senior dev) and review the results. This ensures that as coding automates, best practices and security are enforced through human oversight. The design of the system and the discipline of the development process become the true measures of quality.

Risks of AI-Generated Code

Relying on AI for code comes with pitfalls. One major concern is reliability. AI models can hallucinate – confidently referencing non-existent libraries or functions. Studies show nearly one-third of AI-generated code snippets contain security weaknesses. Without scrutiny, these snippets could introduce vulnerabilities. Code quality also suffers if unchecked: AI may produce orphaned or redundant functions, overly complex logic, and inconsistent naming conventions. For instance, Ox Security’s analysis found that AI tends to follow “by-the-book” patterns and avoids refactoring, leading to bloated implementations that are hard to maintain. AI often adds edge-case code that developers might not have written, increasing the maintenance burden.
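Hallucinated libraries are one of the few risks here that can be caught mechanically. As a rough sketch, the helper below parses a generated Python snippet and lists the top-level modules it imports that cannot be found in the current environment. The function names are hypothetical, and the check is deliberately narrow: it only looks at module names, so it would not catch a made-up function inside a real library.

```python
import ast
import importlib.util


def _is_available(module_name: str) -> bool:
    """True if the top-level module can be located in the current environment."""
    try:
        return importlib.util.find_spec(module_name) is not None
    except (ImportError, ValueError):
        return False


def unresolved_imports(source: str) -> list[str]:
    """Return the top-level modules imported by `source` that cannot be found."""
    modules = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            # Relative imports (level > 0) are skipped; they refer to local code.
            modules.add(node.module.split(".")[0])
    return sorted(name for name in modules if not _is_available(name))


generated = "import json\nimport totally_made_up_pkg_xyz\nfrom os import path\n"
print(unresolved_imports(generated))
```

A module that is simply not installed yet would be flagged as well, so the result is a prompt for a human to look, not a verdict.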

Maintainability is another risk. An AI-assisted project can quickly accumulate a large volume of code, and as prompts evolve or models are updated, previously generated code may become obsolete or incompatible. As one expert observed, organizations can go from “AI is accelerating development” to “we can’t ship features because we don’t understand our own systems” in under two years. Technical debt behaves differently, too: where debt in hand-written code tends to build gradually, debt in AI-generated code can compound exponentially. Contributing factors include code bloat from repeated generation, fragmentation from teams using different AI tools, and evolving AI capabilities that outpace existing designs.

Security and compliance must be built in, not bolted on later. Traditional manual code review alone can’t keep up with AI’s speed. Firms now suggest baking security requirements into the AI prompts themselves, and using automated security scanners tuned for generated code. For example, prompts might explicitly request no use of insecure libraries or adherence to company encryption standards. Additionally, teams are creating “AI instructions” or rules files (such as GitHub Copilot’s .github/copilot-instructions.md) to guide the AI’s style and security standards.

To manage these risks, engineering leaders apply guardrails. They set up automated tests and CI gates to validate AI code, and they maintain a “living documentation” mindset. Senior engineers mentor juniors on how to review AI output critically. The emerging view is that AI is best seen as “implementation support” – the AI writes code, but humans review and refine it. By treating AI like a member of the development team (one that needs oversight), teams can harness its speed while controlling quality.
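A CI gate of this kind can be quite small. The sketch below is one possible shape, not a prescribed setup: it runs a set of named check commands and passes only if every one exits with status 0. The function names are hypothetical, and which commands to run (test runner, linter, security scanner) is left to the team.

```python
import subprocess
import sys


def run_gate(checks: dict[str, list[str]]) -> dict[str, bool]:
    """Run each named command and record whether it exited successfully."""
    results = {}
    for name, command in checks.items():
        completed = subprocess.run(command, capture_output=True, text=True)
        results[name] = completed.returncode == 0
    return results


def gate_passes(results: dict[str, bool]) -> bool:
    """The gate passes only if every individual check passed."""
    return all(results.values())


# Stand-in commands so the sketch runs anywhere; a real pipeline would
# substitute its own test, lint, and security-scan commands here.
example_checks = {
    "always_ok": [sys.executable, "-c", "raise SystemExit(0)"],
    "always_fails": [sys.executable, "-c", "raise SystemExit(1)"],
}
```

Calling `gate_passes(run_gate(example_checks))` returns `False` because one stand-in check fails. In a pipeline, that result would be turned into a non-zero exit code so the merge is blocked until a human has looked at the failure.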

The Future of Engineering Teams

The rise of AI in software development will shift team roles and skill sets. Traditional job titles will evolve. Many engineering teams may create dedicated AI Orchestrator or Prompt Engineer roles – specialists who design prompts and workflows for AI agents. These individuals act like project managers for AI: decomposing features into tasks and orchestrating multiple AI agents to build, test, and integrate those tasks. The concept of a “vibe coder” (a developer who is 100% prompt-driven) is emerging – essentially an AI prompt engineer or creative director. Such engineers will focus on defining high-level outcomes and reviewing AI work rather than writing code themselves.

Meanwhile, system architects will become even more important. As AI handles code, architects guide the overall design and ensure that software scales across distributed environments. Platform engineering roles will grow to manage the underlying infrastructure that supports AI-enabled pipelines and data flows. DevOps teams will incorporate AI-operated monitoring and auto-remediation tools. Product managers will learn to work with AI to prototype features quickly, iterating requirements in real-time with AI feedback. Even business or domain experts could contribute to development, as long as they collaborate with AI to flesh out ideas and engineers to validate them.

Despite these changes, engineers won’t be replaced. Senior developers and architects will still be needed to oversee production deployments and to tackle novel problems. Complex features and critical systems still require human creativity and judgment. But with AI coding tools, the same engineering team can deliver far more. The focus of engineering effort will gravitate toward higher-order problems: strategic design, performance optimization, security, and innovation. In this sense, software engineering remains “craftsmanship,” but the craft has shifted from writing code to sculpting intelligent systems. As one report puts it: “AI excels at implementation, human creativity remains irreplaceable for breakthrough innovation”.

In practice, the most successful teams will blend AI talents with human expertise. For example, a developer might prompt an AI agent to generate a prototype feature, review it, and then ask the AI to iterate on improvements. This cyclical human-AI collaboration model becomes the new norm. Over time, roles may specialize: some engineers will be builders who write little code but guide systems, others will be guardians ensuring quality, and new hybrids like platform engineers will merge infrastructure skills with AI savvy. The key is that every team member stays focused on solving user problems – the shifting workflows and tools simply change how that is done.

Conclusion

The era of AI-assisted development is reshaping software engineering in profound ways. Developers will not vanish, but their role will evolve. Instead of manual coding, they will spend more time on system design, architecture, and workflow orchestration. AI coding assistants and agents will handle boilerplate and routine code, enabling faster delivery of features. This transformation requires a shift in skills: mastery of system architecture, scalability, and integrations becomes as important as knowing a programming language. It also demands new processes for quality control and security to mitigate the risks of AI-generated code.

Ultimately, AI tools amplify human capabilities. When guided by skilled engineers, they accelerate development without sacrificing reliability. We will move toward a future where building software is more about defining what a system should do than spelling out how to do it. As one expert observes, this future is not about replacing developers but “reimagining their role to focus on strategic decision-making, creativity, and systemic insight”. In this new paradigm, the best engineering teams will be those that combine human judgment with AI efficiency. Their success will come from blending strong system thinking and robust architecture with innovative AI development tools – delivering software that is not just built faster, but built smarter.
